Space Orbs

“Space Orbs” is a Virtual Reality puzzler.

PROJECT INFORMATION

Date: 8th semester (2017)

Duration: 8 weeks

Team Size: 1 person

Technology: Oculus Rift, Unreal Engine 4, Blueprint Visual Scripting, Autodesk Maya, Adobe Photoshop, Ableton Live

Constraints: Develop a fully playable VR prototype with emphasis on motion controls.

Oculus Rift Headset Rev 2.0

Introduction

This project reflects what I have learned and achieved over the last three and a half years, and I aim to combine the knowledge I have gained from my studies with the experience I have gained from work.

Oculus Rift Motion Controllers

Motivation

I am interested in virtual reality and mixed reality, and I believe that these technologies will shape the future of the media landscape. The technology is still fairly new, which leaves us developers a lot of room to explore. I am very curious about its potential and how it can be used. I am sure that we are only seeing the tip of the iceberg and that we are still in the process of comprehending its possibilities.

Oculus Rift Motion Tracker

Goals

My goal is to create a virtual reality game that is not only appealing in its design but also set up so that the content is modular and easily expandable. For that, it is essential to plan carefully and lay a clean foundation for the framework. I hope that this will result in high stability and good expandability, as well as a solid basis for further development. I believe that if the foundation is set up correctly, content creation can be very efficient. With this in mind, I am aiming to create enough content for roughly thirty minutes of playtime.

Technology

I have experimented with both the HTC Vive and the Oculus Rift. While the HTC Vive is superior in image quality and room-scale tracking, the Oculus Rift is superior in comfort and setup. Although I like the touchpads on the HTC Vive's motion controllers, I prefer the Oculus Rift's controllers because of their more ergonomic design.

For my game, none of the HTC Vive's advantages would have had a significant impact, so I chose the device that was sufficiently tailored to my needs.

Work Process

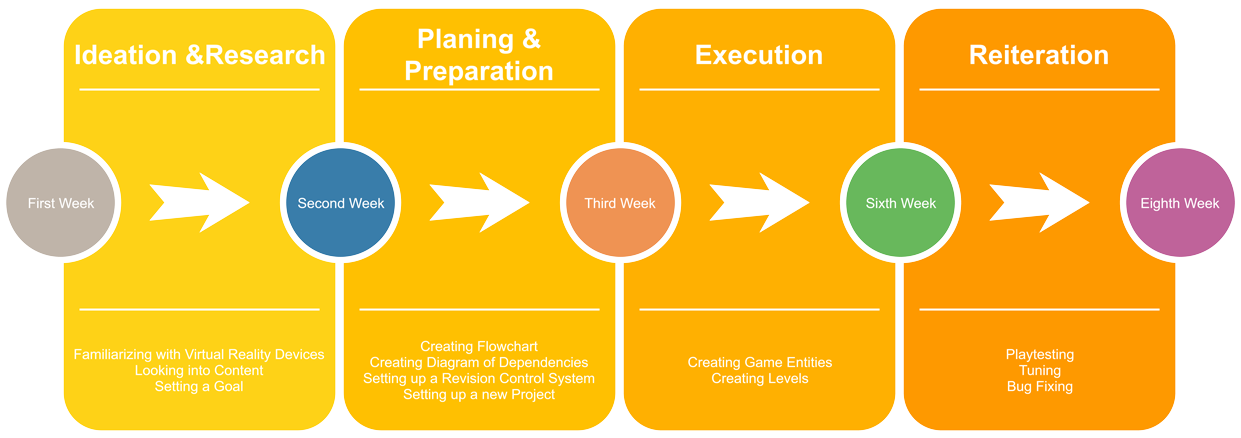

Timeline

Ideation

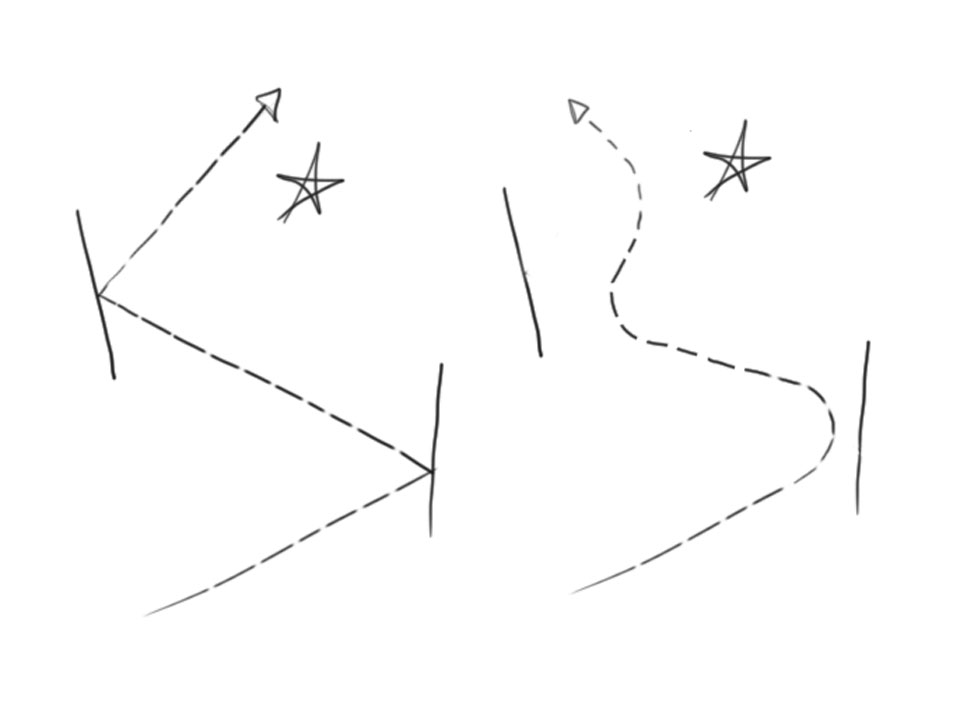

Conventional video games require the player to cognitively map specific actions to corresponding buttons or even button combinations. Motion controllers allow for input that is much closer to the actions of everyday life. Simple tasks like reaching out for objects, grabbing and dropping them feel very natural. This simplicity and natural mapping of the controls make virtual reality an enjoyable experience for everyone. It was important to me to preserve this quality and not end up with a buzz wire game.

Throwing objects is interesting in many ways. At its core, it is a seemingly straightforward action, but it quickly gets hard when you are asked to throw an object consistently. I can throw a basketball, but can I hit the basket? So many variables contribute to the outcome that even professional basketball players cannot predict their shots with 100% accuracy. Most of those variables, including angle and force, can also be replicated in virtual reality. But I did not want to create a basket-shooting simulator.

One of the great features of virtual reality is the possibility to set up conditions that are difficult to create in the real world, and let’s face it: gravity was always an annoyance in sports class.

Preparation

Game Loops

Dependencies

Execution

To make development as efficient as possible, I carefully sketched out the dependencies between game objects. The graph to the right shows which entities inherit from which.

Review

Because of the focus on establishing a robust framework, adding more content in the form of extra stages became very easy. Thanks to the modular system, it is purely a matter of arranging the layout, setting a few triggers and adding the stage to the level array. This allows more time to be spent on actually designing levels rather than solving implementation problems, and I believe this is noticeable while playing my game. I could already build much more content using only the currently available modules, and adding new modules and features should be relatively easy as well.

Given how much quality content I was able to create in such a short time, I consider this project a success.

Cubed

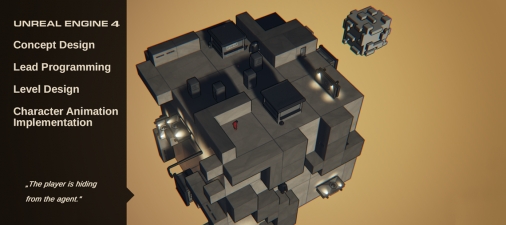

“Cubed” is a partly procedurally generated, high-score-based stealth action game with puzzle elements.

PROJECT INFORMATION

Date: 3rd semester (2014/2015)

Duration: 5 months

Team Size: 7 people

Technology: Unreal Engine 4, Blueprints Visual Scripting

Constraints: Make a fully playable game using the Unreal Engine 4 and its Blueprint System. Use a modular system with a maximum of 10 different objects.

Introduction

“Cubed” is my second team project in game development. In the first team project, “Armville”, I had already gained a lot of experience, so my expectations for this project were very high. Because I took on a broad variety of tasks in that project, I was able to broaden my knowledge and find out which areas of game development interest me most. This knowledge enabled me to specialize this time and go into more depth, especially in scripting and coding.

Concept

“Cubed” is a partly procedurally generated, high-score-based stealth action game with puzzle elements. Our project was strongly inspired by the Rubik’s Cube and grew out of the idea of combining the problem-solving aspect of puzzle games with the high tension of stealth action games. We wanted a game that offers the player a diverse experience with puzzle, stealth and platformer elements. As the player progresses by coloring in the cube, the difficulty continually rises, making it nearly impossible to color in 100% of the cube. To reward highly skilled players without putting too much pressure on beginners, speed is only a small factor.

Transparent materials come in handy when debugging.

Mechanic

The unique selling point is arguably the integration of a fully functional Rubik’s Cube as the playing field of the game. The player can manipulate this cube according to “the principles of Rubik’s Cubes”, meaning that the player can reshape the environment in which her playable character moves. The player could, for example, use this feature to rotate obstacles out of the way. While the playable character can move freely along all surfaces of the cube, the gameplay changes drastically depending on which surface the character is currently on: the top surface is played as a top-down game, while the side surfaces are played as a side-scroller.

Art Style

Especially with regard to the puzzle aspect of our game, it seemed essential that the player could keep the best possible overview of the entire cube. For that reason, we decided that the whole cube should fit onto the screen at all times. This, in turn, forces everything to be far away from the camera, so everything appears smaller. With this in mind, it only made sense to use simple shapes and strong outlines for better readability. A cartoony, slightly over-stylized art style is ideal for this purpose.

The row selectors snap to the cursor.

Solving the Rubik's Cube

This section gives a little insight into how I realized the virtual Rubik's Cube.

Generating the Cube

To have a basic structure from which I could start working on the functionality of the Rubik’s Cube, I decided to spawn the base of the cube via code using three “For Loops”, one for each dimension. Compared with stacking the cubes together by hand, spawning the Rubik’s Cube via code makes it easy to customize through parameters: variations like the number of segments or the scale of each segment can be changed by modifying a few variables.
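Outside the engine, the three nested loops can be sketched in Python as below. This is a minimal illustration, not the original Blueprint: `Cubelet`, `generate_cube`, `segments` and `scale` are hypothetical names, and the cube is assumed to be centred on the origin.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Cubelet:
    grid: tuple  # integer index (x, y, z) of the cubelet inside the cube
    position: tuple  # world-space centre of the cubelet


def generate_cube(segments: int = 3, scale: float = 100.0) -> list:
    """Spawn segments**3 cubelets centred on the origin, one loop per dimension."""
    offset = (segments - 1) / 2.0  # shifts indices so the cube is centred
    cubelets = []
    for x in range(segments):  # first dimension
        for y in range(segments):  # second dimension
            for z in range(segments):  # third dimension
                position = ((x - offset) * scale, (y - offset) * scale, (z - offset) * scale)
                cubelets.append(Cubelet((x, y, z), position))
    return cubelets


# A classic 3x3x3 cube and a larger 4x4x4 variant, just by changing parameters.
classic = generate_cube(segments=3, scale=100.0)
big = generate_cube(segments=4, scale=50.0)
```

Changing `segments` or `scale` is all it takes to produce a different cube, which is the advantage over hand-placed cubes.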

Selected rows can be rotated in either direction.

Making it rotate

With the basic structure of the Rubik’s Cube in place, the next task was to find a way to group a row of cubes together and rotate them. For that, I created two colliders with a custom material that lets them change their appearance dynamically. To make those colliders movable, I created a cursor, which is spawned on top of the Rubik’s Cube. Each collider used for rotating the cube follows the cursor along its own axis. To avoid misbehavior, the colliders must not follow the cursor seamlessly but snap onto the grid of the Rubik’s Cube. With grid snapping in place, I was able to group all cubes that overlap a collider by attaching them to a common “Target Point” in the center of the Rubik’s Cube. The attached cubes can then be rotated around the “Target Point” and finish their rotation by snapping to a 90° angle.
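The grouping and the 90° snap can be illustrated with a small Python sketch. It works on integer grid coordinates centred on the cube (so a 3x3x3 cube uses -1, 0 and 1), selects the cubelets lying in the chosen layer, rotates them around the centre and rounds the result back onto the grid. `rotate_slice` and its parameters are hypothetical, and the sketch only tracks positions, not the orientation of each cubelet.

```python
import math


def rotate_slice(positions, axis, layer, quarter_turns=1):
    """Rotate every cubelet whose coordinate on `axis` equals `layer` by
    quarter_turns * 90 degrees around that axis through the cube centre.

    positions: list of integer (x, y, z) tuples centred on the origin.
    axis: 0, 1 or 2 for x, y or z.
    """
    angle = math.radians(90 * quarter_turns)
    c, s = math.cos(angle), math.sin(angle)
    # The two axes spanning the rotation plane, in right-handed order.
    u, v = [(1, 2), (2, 0), (0, 1)][axis]
    rotated = []
    for p in positions:
        if p[axis] != layer:  # not overlapped by the selector: leave it alone
            rotated.append(p)
            continue
        q = list(p)
        q[u] = p[u] * c - p[v] * s
        q[v] = p[u] * s + p[v] * c
        # Snap back onto the integer grid to remove floating-point drift.
        rotated.append(tuple(int(round(k)) for k in q))
    return rotated


grid = [(x, y, z) for x in (-1, 0, 1) for y in (-1, 0, 1) for z in (-1, 0, 1)]
after = rotate_slice(grid, axis=2, layer=1)  # turn the top z-layer once
```

Rounding after every turn plays the same role as the final snap to 90°: small numerical errors never accumulate across many rotations.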

Easy modification of the cube by changing variables.

Making Customization Easy

Because we had not defined our game mechanics yet and were still experimenting to figure out what kind of game to build around the Rubik’s Cube, I thought it would be handy to be able to customize and adjust the cube in many different ways. To make customization quick and easy, I created a “Game Mode Blueprint” that gathers all modifiable variables for better overview and easy access. I then implemented further modification options for the Rubik’s Cube, which can be selected and adjusted through this “Game Mode Blueprint”.

Our playable character does not like to live in a gray world.

Making of Cubed

This section gives a little insight into how I designed a game around the virtual Rubik's Cube.

His mission: Color in the cube without being caught by agents.

Designing a Game

...is about giving the user the chance to make her own decisions and thereby alter the progress and influence the outcome within a set of rules and constraints, in a way that is predictable but not guaranteed. There has to be a way for the user to communicate with the application in order to change its state. While there are several ways to design such a communication interface, we decided to create a game in which the user communicates with the application by controlling a representative of herself.

Making Decisions

...is about evaluating dependent factors and predicting the result with regard to a preferred outcome. While there are different ways to promote desired outcomes, we decided to design our game around a clear goal.

The score is related to time, whereas the progress bar is not: there is no need to hurry to reach 100%.

Dual Scoring System

The goal is to color in as many faces as possible and thereby set a high score. We wanted the game to be competitive while remaining rewarding for casual players, so we implemented two very different forms of progression feedback. Some players will pay more attention to the color progress bar, which shows the current overall percentage of painted faces, while others will focus on the score, which is designed to be more competitive. The key difference is that the score depends on playtime, while the color progress bar does not.

Camera while on top.

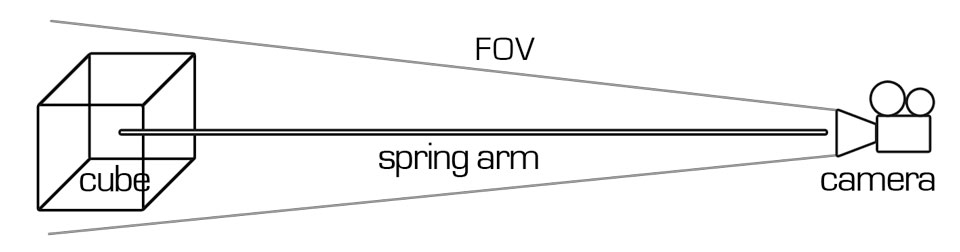

Making all Sides of the Cube Accessible

Because the playing field of our game is cube-shaped and we did not want to limit the player's movement to the top surface, we had to find a solution for the transition from one surface to another. The first step in making all sides of the cube usable was a camera system that provides the best overview of all sides. To do that, I placed a rotation point in the center of the cube and attached a spring arm holding a camera, which is set up to face the center point. Depending on the player's position in world space, the spring arm interpolates to a specified angle.

Camera while on sides.

As soon as the player leaves the top surface, the spring arm automatically orients itself orthogonally to the corresponding side surface. In addition, the spring arm is extended, increasing the distance between the camera and the cube. While the distance changes, the camera's field of view is adjusted accordingly, which allows for a smooth transition between a perspective and a near-orthographic look.
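The idea behind this transition is essentially a dolly zoom, which can be sketched in Python. Keeping a frame of width W fully visible at distance d needs a horizontal field of view of 2·atan(W / 2d), so as the spring arm grows the field of view shrinks and the image flattens toward an orthographic look. The pitch and arm-length targets, the interpolation speed and all function names are hypothetical; `interp_to` only imitates the behaviour of Unreal's `FMath::FInterpTo`.

```python
import math


def interp_to(current, target, dt, speed):
    """Move `current` a frame-rate-aware fraction of the way to `target`
    (similar in spirit to Unreal's FMath::FInterpTo)."""
    if speed <= 0.0:
        return target
    return current + (target - current) * min(dt * speed, 1.0)


def fov_for_width(frame_width, arm_length):
    """Horizontal field of view in degrees that keeps `frame_width` units
    exactly in frame at a distance of `arm_length`."""
    return math.degrees(2.0 * math.atan(frame_width / (2.0 * arm_length)))


# Hypothetical tuning values: a steep, close camera on top, a flat, distant one on the sides.
TOP = {"pitch": -60.0, "arm_length": 1500.0}
SIDE = {"pitch": 0.0, "arm_length": 4000.0}


def update_camera(state, on_top, dt, frame_width=1000.0, speed=4.0):
    """One tick: ease pitch and arm length toward the target, then pick the FOV
    that keeps the cube the same size on screen."""
    target = TOP if on_top else SIDE
    state["pitch"] = interp_to(state["pitch"], target["pitch"], dt, speed)
    state["arm_length"] = interp_to(state["arm_length"], target["arm_length"], dt, speed)
    state["fov"] = fov_for_width(frame_width, state["arm_length"])
    return state


camera = {"pitch": -60.0, "arm_length": 1500.0, "fov": fov_for_width(1000.0, 1500.0)}
for _ in range(200):  # about three seconds at 60 fps after leaving the top surface
    update_camera(camera, on_top=False, dt=1.0 / 60.0)
```

Because the field of view is derived from the current arm length every tick, the cube keeps a constant size on screen while the perspective distortion fades out.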

Double jump is handy.

To give the player some control over the camera, I added the ability to rotate the camera around the cube by changing the yaw value of the rotation point and the ability to tilt the camera up and down by changing the pitch value of the rotation point. I wanted to give the unique shape of our playing field more relevance and make the game feel different after the transition from top surface to side surfaces. The idea was to give the game the feeling of an isometric top-down game when on the top surface while changing it to be a platformer when on the side surfaces.

To emphasize the difference between movement on the top surface and movement on the side surfaces, I lowered the jump height on top and raised it on the sides. Furthermore, movement is locked to a plane while on the sides.

Thinking around Corners

Jumping around corners.

While it is nearly always possible to transition from the top surface down to a side surface, the transition back up is not guaranteed. To compensate for this, I implemented transitions from one side surface to another and the ability to change gravity. Instead of actually changing the direction of gravity, I created the illusion of a gravity change by instantly flipping everything by 90°.

To let the playable character jump around corners, the game needs to understand when the player wants to make a transition. The position of the playable character is tracked continuously and saved into a variable. As soon as a corner area is entered, the application reads the last position of the character and assumes that a transition to the adjacent side is desired. At this moment, the game slows down and readjusts the camera and controls.
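A minimal Python sketch of that decision, assuming each face is identified by a sign and an axis and that the stored position is the one sampled just before the corner area was entered. The face encoding and `target_face` are hypothetical, and the slow-down and camera readjustment are left out.

```python
def target_face(last_pos, current_pos, current_face):
    """Guess which adjacent face the player wants after entering a corner area.

    Faces are written as (sign, axis), e.g. (1, 0) is the +X side. The guess is
    based on the movement since the last stored position, ignoring movement
    along the current face's normal.
    """
    _, normal_axis = current_face
    move = [c - l for c, l in zip(current_pos, last_pos)]
    move[normal_axis] = 0.0
    axis = max(range(3), key=lambda i: abs(move[i]))
    if move[axis] == 0.0:
        return None  # no movement recorded: stay on the current face
    return (1 if move[axis] > 0 else -1, axis)
```

For example, a character on the +X side that was last stored at (100, 90, 0) and enters the corner at (100, 105, 2) is assumed to want the +Y side, returned as `(1, 1)`.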

Designing Tiles

Designing the tiles.

The tiles, which are shuffled and placed around the cube, had to be designed to provide interesting shapes and hiding spots on the top surface as well as platforms and obstacles on the sides. To make sure that the tiles are evenly distributed around the cube, I set up an array containing each tile once. This array is shuffled, and the tiles are laid out accordingly on each side of the cube.
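In Python, the same idea looks roughly like this. The six sides, the requirement that the tile count divides evenly among them, the optional seed and the function name are assumptions made for the sketch.

```python
import random


def layout_tiles(tile_ids, sides=6, seed=None):
    """Shuffle a list that contains every tile exactly once and deal it out
    side by side, so each side receives the same number of distinct tiles."""
    deck = list(tile_ids)  # copy, so the designer's original array stays untouched
    if len(deck) % sides:
        raise ValueError("the tile count must divide evenly across the sides")
    rng = random.Random(seed)  # a fixed seed makes a layout reproducible
    rng.shuffle(deck)  # uniform in-place shuffle
    per_side = len(deck) // sides
    return [deck[i * per_side:(i + 1) * per_side] for i in range(sides)]


tiles = [f"tile_{i:02d}" for i in range(12)]
cube_sides = layout_tiles(tiles, sides=6, seed=42)
```

Because the deck holds each tile exactly once, no tile can appear twice and none can be left out, which gives the even distribution described above.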

Painting the Assets

Painting Assets.

To be able to color in any face of the cube and any face of any asset, the playable character not only needs to distinguish between the assets themselves but also between the individual faces of the same asset. To find out which face the player is hitting, I had to use meshes with predefined face IDs, which could then be assigned to different physical materials.
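The lookup from physical material to face can be sketched in Python as follows, assuming every face of an asset carries its own physical material so that the material reported by a hit identifies the face. `PaintTracker`, the material names and the percentage calculation (the time-independent value a progress bar could show) are hypothetical.

```python
class PaintTracker:
    """Tracks painted faces. Each face of an asset is assumed to carry its own
    physical material, so the material reported by a hit identifies the face."""

    def __init__(self, face_materials):
        # face_materials: {asset_name: [physical material of face 0, face 1, ...]}
        self.lookup = {}
        for asset, materials in face_materials.items():
            for face_id, material in enumerate(materials):
                self.lookup[(asset, material)] = face_id
        self.painted = set()

    def paint(self, asset, hit_material):
        """Mark the face that was hit as painted; False if it has no face ID."""
        face_id = self.lookup.get((asset, hit_material))
        if face_id is None:
            return False
        self.painted.add((asset, face_id))
        return True

    def progress(self):
        """Share of painted faces in percent, independent of playtime."""
        if not self.lookup:
            return 0.0
        return 100.0 * len(self.painted) / len(self.lookup)


tracker = PaintTracker({
    "crate": ["PM_Face0", "PM_Face1", "PM_Face2", "PM_Face3"],
    "wall": ["PM_Face0", "PM_Face1"],
})
first = tracker.paint("crate", "PM_Face1")
repeat = tracker.paint("crate", "PM_Face1")  # already painted: counted once
miss = tracker.paint("crate", "PM_Unknown")  # material without a face ID
```

Keying the lookup on both the asset and the material lets different assets reuse the same set of face materials.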

Designing Behavior

the behavior tree

Because our game is partly about sneaking and hiding, we wanted to include an enemy with a field of view and a ranged sense of hearing that picks up noisy activity. Since our game is procedurally generated to a certain extent, the challenge was to design an enemy that would work with any arrangement of the tiles.

The enemy uses an environment query system that lets him break away from his waypoints and check potential hiding spots. He preferably checks positions that are blocked from his view and close to known suspicious locations. The playable character drops paint while running, which the enemy can track. On sight, the enemy aims at the playable character and charges his taser for an electric shot.
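Unreal's Environment Query System generates candidate points and scores them with tests. A stripped-down Python analogue of the two preferences described above might look like this; the scoring formula, the weights, the toy visibility test and all names are assumptions, not the actual query used in the game.

```python
import math


def rank_hiding_spots(candidates, enemy_pos, clues, is_visible,
                      hidden_bonus=1.0, clue_weight=1.0):
    """Score candidate points the way a simple environment query might:
    points the enemy cannot currently see get a bonus, and points close to a
    known suspicious location (such as a paint drop) score higher.
    Returns the candidates ordered best first."""
    def score(point):
        value = 0.0 if is_visible(enemy_pos, point) else hidden_bonus
        if clues:
            nearest = min(math.dist(point, clue) for clue in clues)
            value += clue_weight / (1.0 + nearest)
        return value

    return sorted(candidates, key=score, reverse=True)


# Toy line-of-sight test: an enemy below the wall line y = 0 only sees points with y <= 0.
def sees(enemy, point):
    return point[1] <= 0.0


spots = [(0.0, 0.0), (10.0, 0.0), (5.0, 5.0)]
ranking = rank_hiding_spots(spots, enemy_pos=(0.0, -10.0), clues=[(10.0, 1.0)], is_visible=sees)
```

Here the hidden spot wins even though the visible spot at (10, 0) is closer to the paint drop, because being out of sight carries the larger bonus.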

Recap

Experimenting with the cube was important.

I was extremely motivated and started prototyping with the Unreal Engine 4 from the very beginning. Although most of the early scripts are no longer in use, they served as a useful exercise and helped me a lot to get into the new engine. The transition from Unity 4 to the Unreal Engine 4 went surprisingly smoothly, and I gained more scripting knowledge than I initially expected.

I already felt quite comfortable working with Unity 4. Feeling even more confident working with the Unreal Engine 4 underlines the success of this project and of my goal of transferring and expanding my knowledge. After spending over 300 hours scripting, I am relieved to know that I am still very interested in programming. I realized, though, that I have to be careful not to be overambitious in future projects. After two very intense projects in which my primary task was to absorb as much information and gain as much knowledge as possible, I see the need to improve my work-life balance in future projects.

Although I was not able to set a specific goal and milestones from the very start, I feel that I have surpassed my expectations by far. I am satisfied with the result as well as with the progress and the experience I have gained through this project. Since I was able to find a solution for every scripting problem I encountered, I believe that I have improved my scripting skills tremendously. Looking back at my expectations, personal goals and milestones, I consider this project a great success. I made a great leap forward in understanding programming and game engines.

Dominion

“Dominion” is a strategic top-down local multiplayer battle arena.

PROJECT INFORMATION

Date: 5th semester (2015/2016)

Duration: 5 months

Team Size: 3 people

Technology: Unreal Engine 4, Blueprint Visual Scripting

Constraints: Develop a fully playable prototype with the focus on AI.

Introduction

“Lemmings” by DMA Design (Rockstar North) in 1991

Our task was to create a game within a three-month period, with each team member spending 600 working hours on the project, for an estimated 1,800 working hours in total. This is the last and biggest group project of our game design studies. With this in mind, we were able to stretch our scope a little further than in previous projects. The topic for this group project is “Agents”.

Concept

“SimCity” by game designer Will Wright in 1989

The focus of this project was to design a game with an emphasis on artificial intelligence. An agent is an entity that can operate by itself without constant player input. It can be an actual visible character, as in games like “Lemmings”. It can also be less visually apparent, for example managing the whole economic system of a game, as in “SimCity”. Agents are game entities that operate as independent individuals or as a group, as in the flocking behavior of ants.

ants using flocking behavior to bridge a gap in real life

One of the main advantages of agent-based systems is that the player can maneuver a large number of units without having to steer each unit individually all the time, as in real-time strategy games such as “StarCraft”. We decided on a system in which the player handles a small number of units. The units would have individual characteristics, behavior and a fairly sophisticated artificial intelligence system, giving each unit a unique personality.

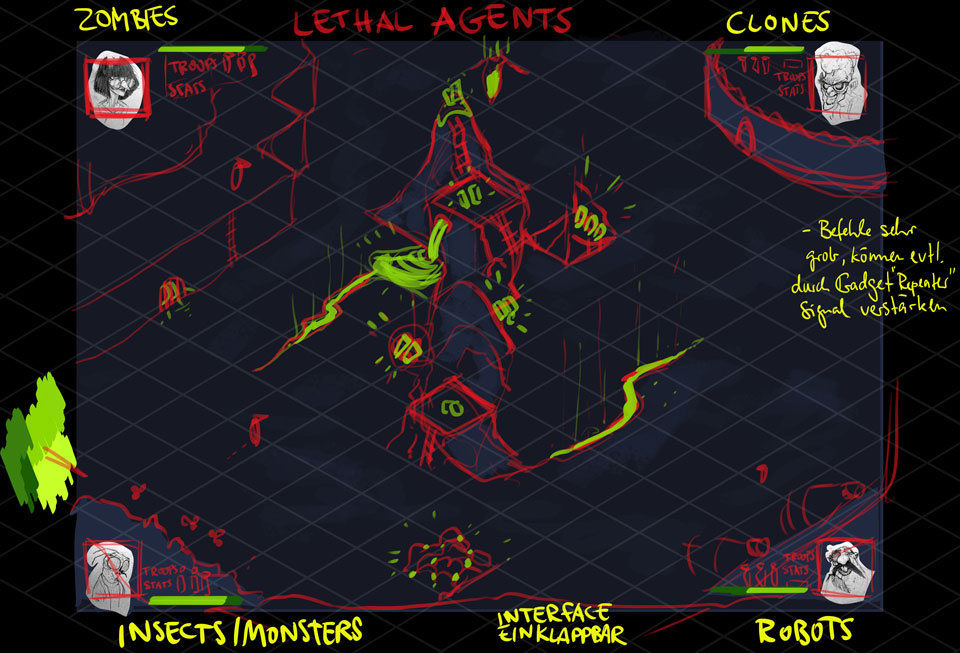

Initial Idea

Concept sketch by Jeremia.

Our game was meant to be a local multiplayer battle arena in which each player is in charge of a little squad. Each squad member would have unique statistics and therefore unique strengths and weaknesses, which would ultimately influence its decision making and thus its behavior. The player would not be able to steer the characters directly. Instead, the player would set the statistics and the class and assign tasks to each squad member, which would determine its behavior.

There would be two classes: workers and fighters. A worker could be given tasks like scavenging for resources or building expansions. Fighters, on the other hand, could be given assignments like looking out for enemies and attacking them, or defending a specific area. The player would be able to change a squad member's assigned tasks whenever needed, but the squad member would have to return to the base or an expansion whenever a change of class is required. This means that changing tasks within a class would be free, but changing the class of a unit would be costly because of the time required to return to the base.

There would be several ways to win the game. A player could win by being the first to collect 3 out of 5 targets, by being the first to reach the target amount of resources, or by being the last surviving race after destroying all enemy bases while keeping their own base intact. Setting our game up as a local multiplayer game was essential to us because we wanted to add a diplomacy system to the game mechanics. We hoped this diplomacy system would encourage the players' social interaction on the metagame layer.

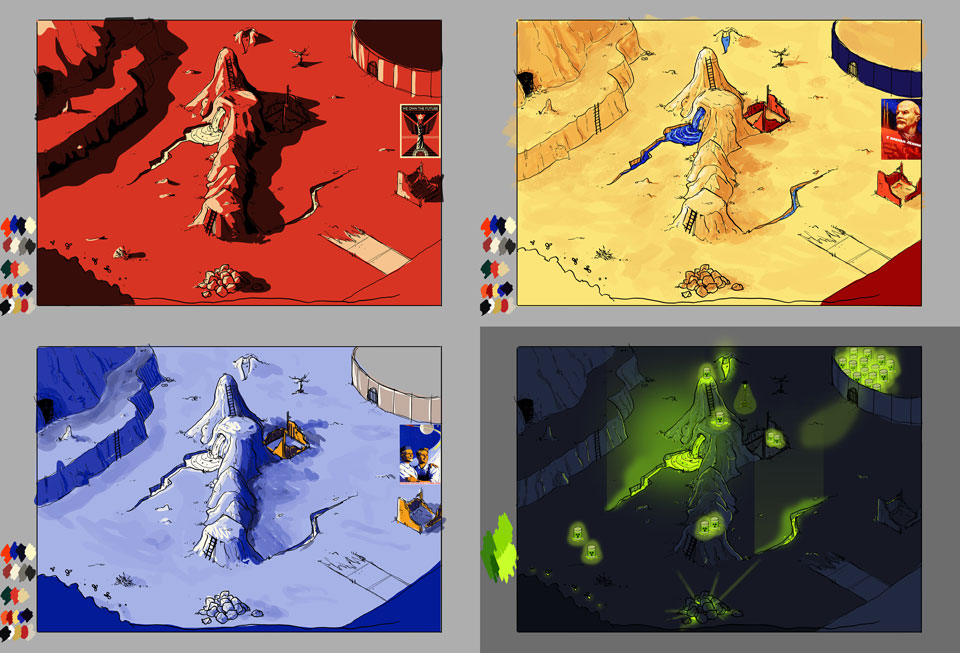

Color schemes by Jeremia.

Art Style

We decided to develop a game that could be played against other players locally on one shared screen. The advantages of a shared screen are the excellent overview of the whole map, the full use of the entire screen for every player, and the convenient, comfortable setup for gaming sessions and presentations. We were eager to develop a fun and good-looking game with beautiful assets and animations. One of the main challenges was to find an art style that would not only look good at full HD resolution but also give all four players an excellent overview of the action on one single shared screen.

Character Design by Jeremia.

Background Story

The background story of the game is about four scientists who went insane because of their dedication to science. These mad scientists are now creating their own little armies of minions to conquer the world.

Dr. ‘Dolly’ Dolbert, who created unsatisfied clones of himself.

Dr. Vicki Frankensteiner, who tried to keep people alive for too long.

Dr. Robert Asimov, who did not see any harm in researching robotics.

Lady Bugoni, who studied bugs and experimented with cross-species hybrids.

My Task Area

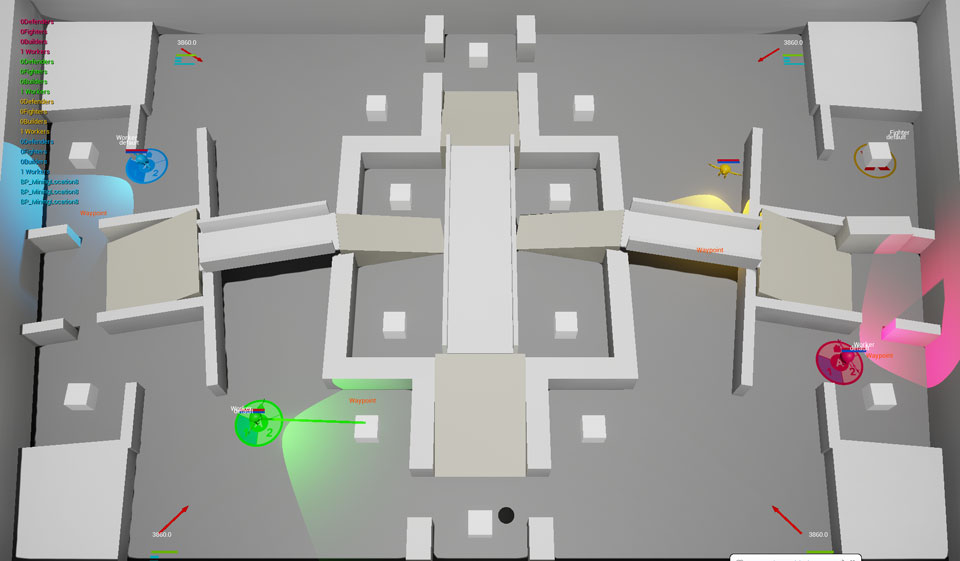

first prototype with the level generator

After our team had agreed on the topic “Agents”, we decided that it would be most efficient if everybody developed multiple gameplay concepts individually. We would then have a pool of thoughts and ideas that we could share, combine or refine.

Once a gameplay concept was defined, my task as the only programmer was to start prototyping. I realized that I would need a consistent level to be able to test the AI, which I began to develop and block out in the engine with brushes. I then programmed the units and a cursor as an interface for selecting and interacting with them. To be able to spawn those units, I created an object representing the base, and I created several items for the AI to interact with. With all this set up, the next big task was to implement the units' behavior. The GUI and animations had to be implemented as well.

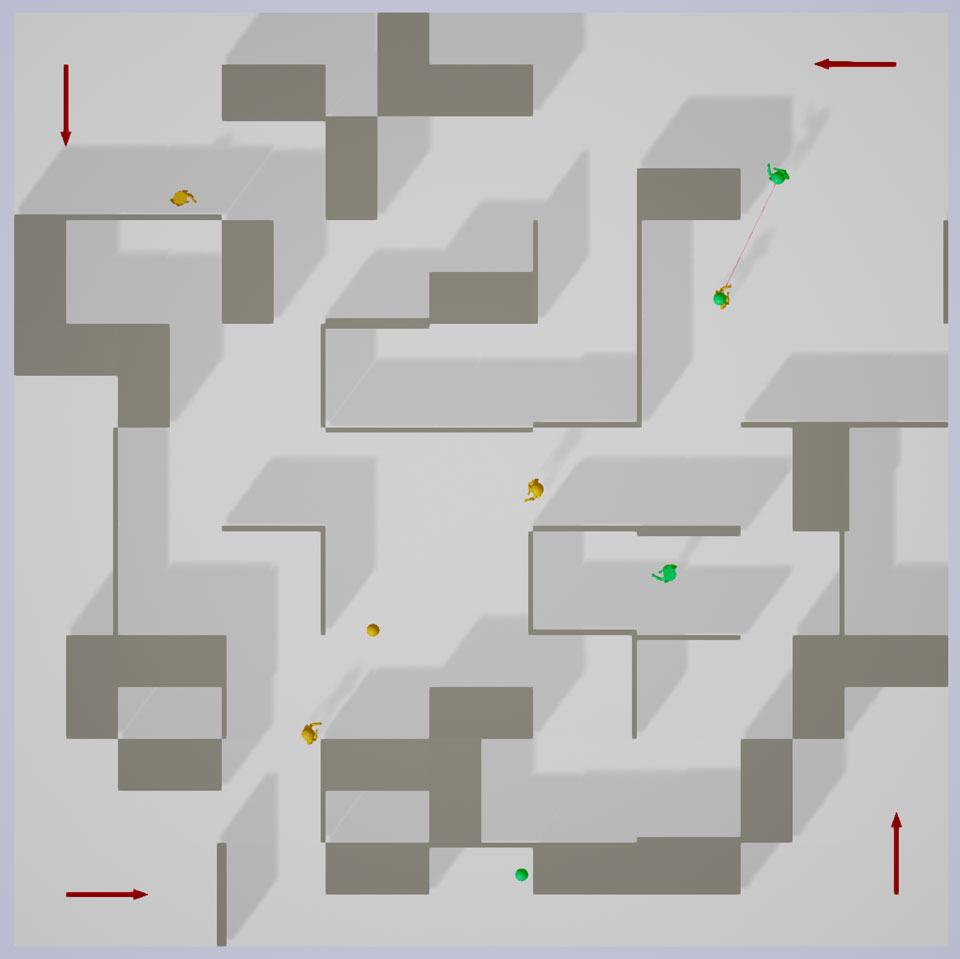

Prototyping

comprehensible vs. not comprehensible

A game concept that relies heavily on artificial intelligence was new to us, and it seemed very important to have a running prototype as soon as possible. We needed to figure out whether, and what, makes a game fun in which the player’s main activity is watching rather than interacting. Besides, I was very unfamiliar with AI systems and needed to understand their capabilities as fast as possible.

I started with a level generator, which would stack random rooms and corridors together. The idea was to confront and test the AI on many different level layouts. I realized that it was too complicated to observe and understand behavior in ever-changing environments. That is why I abandoned the level generator and started blocking out a new persistent level.

an early sketch of the Level Design

Making Changes to the Concept

If behavior follows a simple ruleset, the player will not be disappointed as long as the behavior is consistent, because the player can comprehend the conditions that led to specific actions in certain situations. If the patterns are too complicated, the player might not be able to follow how decisions are made. The behavior should always be readable and appear reasonable in any situation.

the redefined version of the first level

Our game did not deliver on that. Behavior was heavily interconnected with the statistics of a unit, and it became challenging to comprehend how decisions were made. Since the units' behavior was unpredictable, the actual behavior often did not match the expected behavior, and players became dissatisfied. To minimize such situations, we implemented a feature that allowed players to assign tasks to units directly, so that the player could override the units' decisions.

Level Design

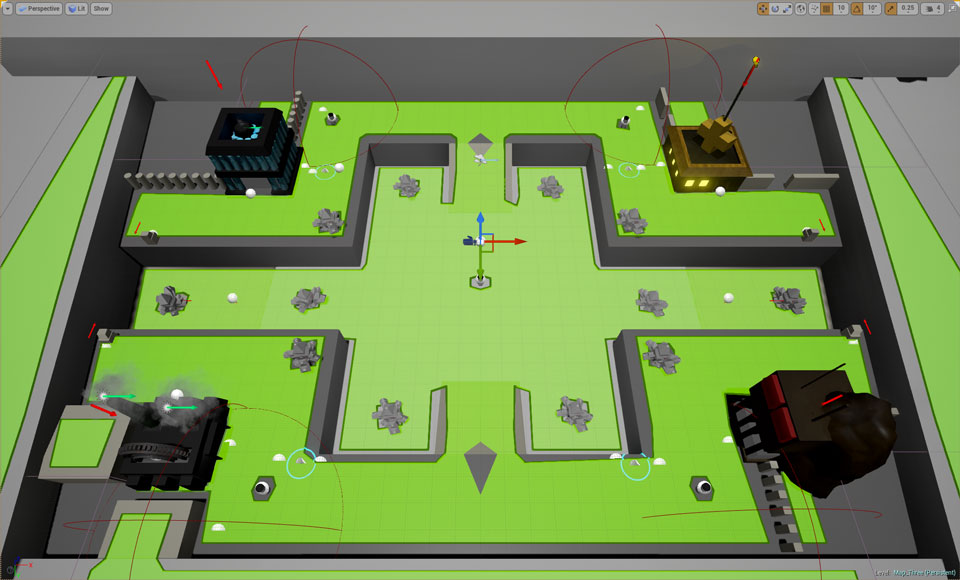

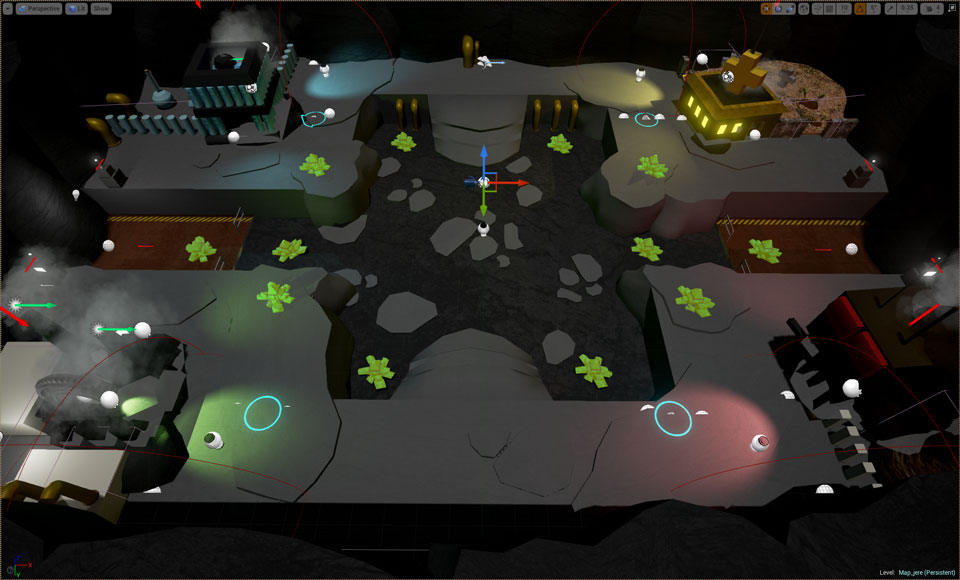

blocked out the new level layout

I started by sketching out a level on paper and used this sketch to block out the level in the game engine. This gave me the opportunity to test the behavior in a consistent environment and helped Jeremia develop the visual appearance of the environment; blocked-out areas were then decorated with his assets. The plan was to combine a consistent map layout with a little randomness, for example in target placement, to increase replayability.

Because we were aiming for a shared-screen game, every bit of space had to be used wisely. Thick solid walls would waste space, while thin planes dividing the space would not look right. Our solution was to use differences in height to separate areas from each other.

Scaling Down

new level layout after adding assets

The level design was a huge task. To fit the whole map onto one single screen, we had to scale everything down. We could not predict whether everything would fit onto one screen in a reasonable manner or whether this concept would work at all. Furthermore, it was quite difficult to predict what kind of level design would work, so it was necessary to try out a lot of features, keep the good ones and throw out the bad ones. The shared-screen concept in combination with full HD resolution was a tough constraint, and I am glad that we figured out a solution. We also experimented with different angles of projection. One idea was to develop this game as a table surface game, but we dismissed it because it did not provide enough benefits compared to the rather complicated setup.

Artificial Intelligence

my interpretation of the AI architecture

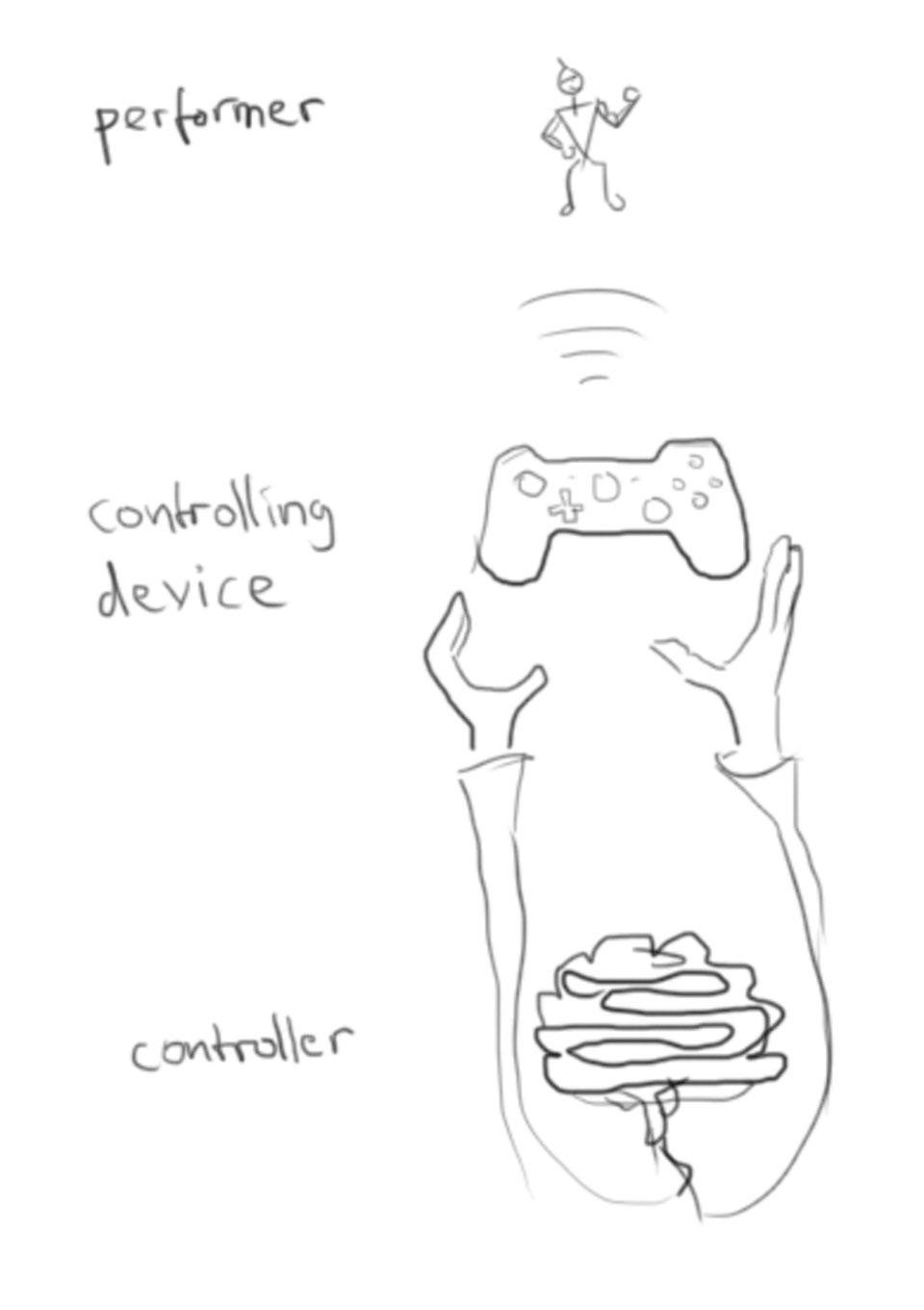

It is crucial to differentiate between what performs the action, what controls the performer, and through which controlling device the performer is controlled. The performer could be the representative game entity, for example the avatar. The controller could be a human being controlling this avatar through an input device, but it could also be a non-human system such as an AI.

This architecture comes in handy whenever the controller wants to change its performer; racing games in which the player can choose from different car models are a good example. Even more importantly, it allows an AI to control the same type of performer in the same manner a human would, so that, for example, empty player slots can be filled with bots.
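The separation can be sketched in a few lines of Python. The class and method names are hypothetical, although the idea loosely mirrors Unreal's Controller/Pawn split, in which a controller possesses a pawn. A human controller reading an input device and a bot controller both drive the same kind of performer.

```python
from abc import ABC, abstractmethod


class Performer:
    """The representative game entity, e.g. an avatar, a car or a unit."""

    def __init__(self, name):
        self.name = name
        self.actions = []  # what this performer has been told to do

    def perform(self, action):
        self.actions.append(action)


class Controller(ABC):
    """Whatever drives a performer: a human or an AI."""

    def __init__(self):
        self.performer = None

    def possess(self, performer):
        self.performer = performer  # switching performers needs no other change

    def tick(self):
        if self.performer is not None:
            self.performer.perform(self.decide())

    @abstractmethod
    def decide(self):
        """Return the next action for the possessed performer."""


class HumanController(Controller):
    def __init__(self, read_device):
        super().__init__()
        self.read_device = read_device  # the controlling device, e.g. a gamepad

    def decide(self):
        return self.read_device()


class BotController(Controller):
    def decide(self):
        return "drive forward"  # placeholder for a real AI decision


car_a, car_b = Performer("car_a"), Performer("car_b")
player = HumanController(read_device=lambda: "steer left")
player.possess(car_a)
player.tick()
player.possess(car_b)  # the player picks a different car
player.tick()
bot = BotController()  # a bot fills the empty slot and drives car_a
bot.possess(car_a)
bot.tick()
```

Neither controller knows anything about the concrete performer beyond `perform`, which is what makes swapping cars or filling slots with bots cheap.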

Behavior

Having set up the meshes for the units, I now needed to give them behavior. For that, I needed to set up an AI Controller and a Behavior Tree with all its Services, Decorators, and Tasks. Following my previous example, the representative body including the mesh would be the “performer”, the behavior tree would be the “controller”, and the AI controller would be the “controlling device”.

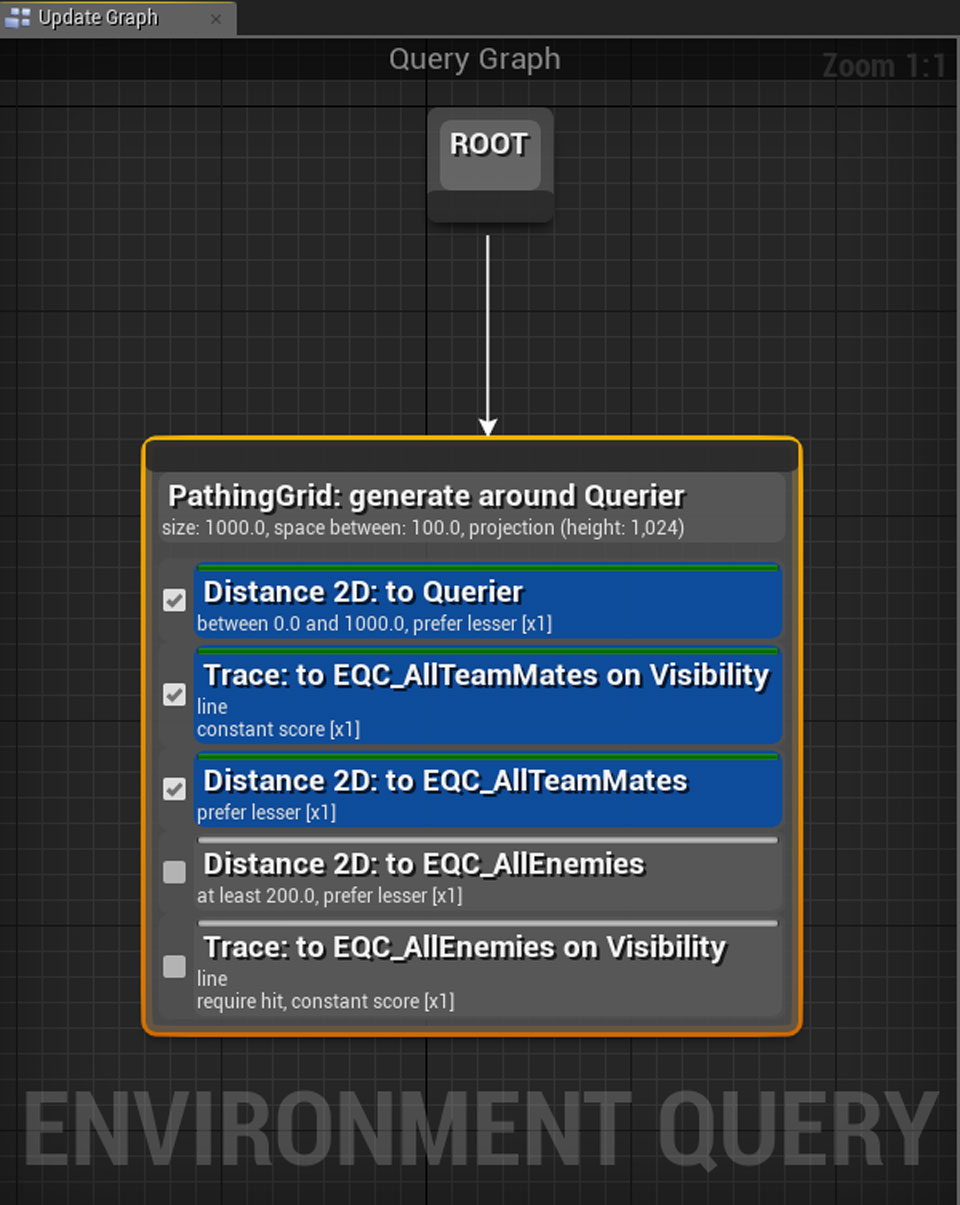

Setting up an Environment Query.

The sensing component enables an AI to sense its environment through vision or hearing. Unlike human players, the AI does not look at the screen but senses its surroundings from the body's perspective. Nonetheless, the sensing component is part of the AI controller: the AI Controller not only possesses the body and runs the behavior tree on it but also contains the sensing component. With this component, the AI can sense specific objects in its surroundings; in this case, it can detect all interactable game objects. Every sensed object is categorized and analyzed to figure out whether it is relevant and should influence the behavior.

In this example a worker is sensing a fighter:

--> is this fighter one of ours?

--> is this fighter already occupied with something?

--> am I already threatened by another fighter and if so, is this other fighter closer to me than the sensed one?

If any of these checks is answered with yes, the sensed fighter is ignored. If all of them are answered with no, the detected fighter is an enemy fighter that has no other occupation right now and is closer than any other threatening opponent. This relevant fighter is then passed to the behavior tree and saved into a variable. The variables set by the sensing component, combined with the variables the player sets when assigning tasks, form different combinations that can lead to different behavior.
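The three checks translate into a small filter. The Python sketch below assumes a unit has a team, a position and an occupied flag; `Unit`, `update_threat` and the returned value standing in for the behavior tree variable are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass
class Unit:
    team: int
    position: tuple
    occupied: bool = False  # already busy with another task?


def update_threat(worker, sensed, current_threat=None):
    """Run the three checks on a sensed fighter and return the fighter that
    should be stored as the worker's threat (the behavior tree variable)."""
    if sensed.team == worker.team:  # is this fighter one of ours?
        return current_threat
    if sensed.occupied:  # is this fighter already occupied with something?
        return current_threat
    if current_threat is not None and (
        math.dist(worker.position, current_threat.position)
        < math.dist(worker.position, sensed.position)
    ):  # am I already threatened by a closer fighter?
        return current_threat
    return sensed  # every check answered "no": this fighter is relevant


worker = Unit(team=0, position=(0.0, 0.0))
ally = Unit(team=0, position=(1.0, 0.0))
busy_enemy = Unit(team=1, position=(2.0, 0.0), occupied=True)
far_enemy = Unit(team=1, position=(10.0, 0.0))
near_enemy = Unit(team=1, position=(3.0, 0.0))

threat = None
for fighter in (ally, busy_enemy, far_enemy, near_enemy):
    threat = update_threat(worker, fighter, threat)
```

After all four sensing events, the stored threat is the nearest free enemy fighter; the ally and the busy enemy never make it into the variable.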

Environment Query System with scores

In this example, a fighter has different conditions for entering a specific behavior:

fighter sensed relevant enemy worker --> go to enemy worker

fighter sensed relevant enemy fighter --> go to enemy fighter

fighter is low on health --> hide

With these conditions and behaviors in place, we can set up the priority of each branch. In this example, the last condition has the highest priority and the second-to-last condition the second-highest. These priorities let the behavior tree decide which behavior is more important. In our example, if the fighter senses an enemy worker and an enemy fighter at the same time, he approaches the enemy fighter because of its higher priority. In any case, if he is low on health, he hides, since that has top priority.
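The priority order behaves like an ordered list of condition/behavior pairs in which the first matching condition wins, similar in spirit to the left-to-right evaluation of children under a Selector node in a UE4 Behavior Tree. A minimal Python sketch, assuming the unit state is a plain dictionary with hypothetical keys such as `health` and `enemy_fighter`, and a hypothetical `"idle"` fallback:

```python
from typing import Callable

# A branch pairs a condition on the unit state with the behavior it triggers.
Branch = tuple[Callable[[dict], bool], str]

# Listed from highest to lowest priority.
FIGHTER_BRANCHES: list[Branch] = [
    (lambda s: s["health"] < s["low_health_threshold"], "hide"),
    (lambda s: s.get("enemy_fighter") is not None, "go to enemy fighter"),
    (lambda s: s.get("enemy_worker") is not None, "go to enemy worker"),
]


def select_behavior(state: dict, branches: list[Branch] = FIGHTER_BRANCHES) -> str:
    """Return the behavior of the first (highest-priority) branch whose condition holds."""
    for condition, behavior in branches:
        if condition(state):
            return behavior
    return "idle"  # hypothetical fallback when no condition is met
```

Reordering the list is all it takes to change the priorities, which mirrors rearranging branches in the tree.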

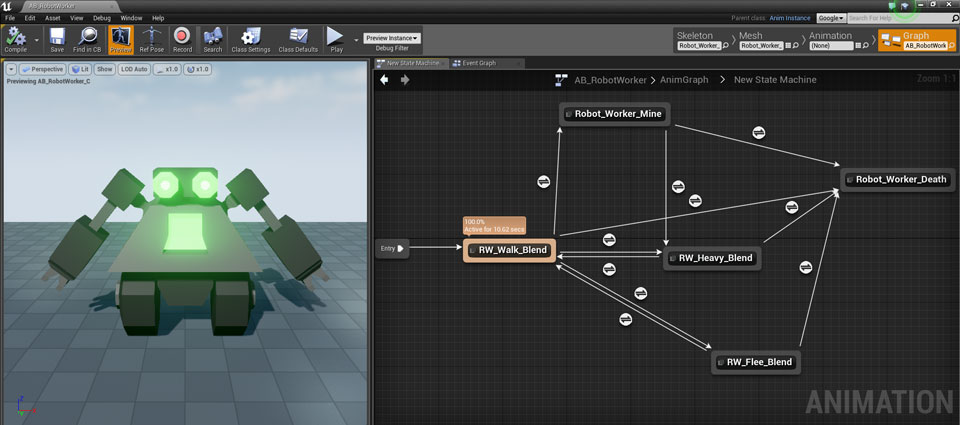

Animation

A worker unit's State Machine

Another task of mine was to integrate the animation assets into our game, which involved setting up the Animation Blueprints and Blend Spaces. Animation is essential for giving the player visual feedback about the state of the game, because it communicates and visualizes the current activity of the units.

To guarantee a smooth transition from idling to running, I set up one-dimensional blend spaces with loopable idle, walk, and run animations. Depending on the unit's velocity, the blend space blended seamlessly from idle to walk and from walk to run. Because sudden changes in velocity caused small hiccups while blending, I fed the blend spaces an interpolated velocity value instead of the raw one.
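A one-dimensional blend space maps a single input value onto weights for the two neighboring sample animations, and the smoothing step eases the raw velocity toward its target each frame. The following Python sketch illustrates both ideas under assumptions: the sample positions (0, 150, 600) are hypothetical, and `interp_to` is a simple frame-rate-scaled approach comparable in spirit to UE4's `FMath::FInterpTo`, not a copy of the engine code.

```python
# Hypothetical sample positions on the speed axis, sorted ascending.
LOCOMOTION_SAMPLES = [("idle", 0.0), ("walk", 150.0), ("run", 600.0)]


def blend_weights(speed: float, samples: list[tuple[str, float]]) -> dict[str, float]:
    """Linearly blend between the two samples that bracket `speed` on a 1D axis."""
    speed = max(samples[0][1], min(speed, samples[-1][1]))  # clamp to the axis range
    weights = {name: 0.0 for name, _ in samples}
    for (name_a, a), (name_b, b) in zip(samples, samples[1:]):
        if a <= speed <= b:
            t = (speed - a) / (b - a)
            weights[name_a] = 1.0 - t
            weights[name_b] = t
            break
    return weights


def interp_to(current: float, target: float, delta_time: float, interp_speed: float) -> float:
    """Move `current` toward `target` by a frame-rate-scaled fraction of the remaining gap."""
    if interp_speed <= 0.0:
        return target
    alpha = min(max(delta_time * interp_speed, 0.0), 1.0)
    return current + (target - current) * alpha


# Per-frame usage: smooth the raw velocity first, then derive the blend weights.
smoothed_speed = 0.0
for raw_speed in (0.0, 600.0, 600.0, 600.0):  # a sudden jump from standing to running
    smoothed_speed = interp_to(smoothed_speed, raw_speed, delta_time=1 / 60, interp_speed=10.0)
    weights = blend_weights(smoothed_speed, LOCOMOTION_SAMPLES)
```

Feeding the smoothed value spreads a sudden velocity jump over several frames, so the weights change gradually instead of snapping.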

Montage Blueprint of a fighter

A State Machine regulates the timing of the transition from one animation state to another. It observes whether the predefined conditions for a change are met and performs the transition when necessary. To get the fighter units to play the walk and the attack animation at the same time, I had to set up Animation Montages. These Montages can be started and stopped via Blueprint and stitched onto an active pose, beginning from a dedicated bone. This way, the fighter's final pose can take the locomotion pose from the state machine while the attack animation plays on the upper body.
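Stitching a montage onto the locomotion pose from a dedicated bone downward can be pictured as a per-bone selection over the skeleton hierarchy. This Python sketch is a simplification under stated assumptions: the bone names, the parent map, and representing each pose as one label per bone are hypothetical, and it performs a hard switch at the branch root instead of a weighted blend.

```python
from typing import Optional

# Hypothetical skeleton: each bone maps to its parent (None for the root).
SKELETON: dict[str, Optional[str]] = {
    "pelvis": None,
    "thigh_l": "pelvis",
    "thigh_r": "pelvis",
    "spine_01": "pelvis",
    "spine_02": "spine_01",
    "upperarm_l": "spine_02",
    "upperarm_r": "spine_02",
}


def is_in_branch(bone: str, branch_root: str, skeleton: dict[str, Optional[str]]) -> bool:
    """True if `bone` is `branch_root` itself or one of its descendants."""
    current: Optional[str] = bone
    while current is not None:
        if current == branch_root:
            return True
        current = skeleton[current]  # walk up toward the root
    return False


def layered_pose(base_pose: dict[str, str], montage_pose: dict[str, str],
                 branch_root: str, skeleton: dict[str, Optional[str]]) -> dict[str, str]:
    """Use the montage pose from `branch_root` downward and the locomotion pose elsewhere."""
    return {
        bone: montage_pose[bone] if is_in_branch(bone, branch_root, skeleton) else base_pose[bone]
        for bone in skeleton
    }
```

With `spine_01` as the branch root, the legs keep walking while the spine and arms play the attack.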

Recap

testing our game as a surface app

Compared to previous projects, this turned out to be the most complicated one so far. I tremendously underestimated the workload of complex AI systems. It took me a lot of time to get used to the AI components of Unreal Engine 4, and I needed numerous iterations. Nonetheless, I feel that I have learned a lot about game AI and understand it much better now. I think this knowledge will come in very handy for future projects.

The concept of the game changed a lot during development. Features had to be replaced or removed. One of the most significant changes was the introduction of assignable tasks. I think that only through these many iterations was it possible to reach the final stage. Next time, I have to set the scope right to keep a healthy work/life balance. The crunch time got very tense, and I want to avoid that in future projects.

Overall, I am satisfied with the outcome of this project. The game is fun, and making it helped me a lot to understand the basics of game AI.

Odd One Out

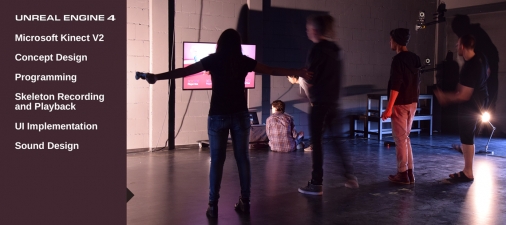

“Odd One Out - humans not allowed” is a multiplayer party game.

PROJECT INFORMATION

Date: 4th semester (2015)

Duration: 3 weeks

Team Size: 3 people

Technology: Unreal Engine 4, Blueprint Visual Scripting, Microsoft Kinect V2

Constraints: Develop a fully playable prototype on the topic: Full Body Game.

Gameplay Video

Introduction

Character Sketches by Betti.

Unlike previous projects, which ran for the whole semester, this project is an experimental three-week game jam. Not only was the time cut down significantly, but the group size was also halved, from around six to three members. The scope of this project was therefore strongly limited compared to previous projects, and our schedule had to be planned more carefully than ever.

The topic for our group project is: “Full Body Game”.

Concept

Character Sketches by Betti.

Looking at existing Kinect games, we could not find a single one that met our expectation of “making good use of body tracking”. Our mission was to work out a game concept in which body tracking is truly necessary. We did not want to create yet another Kinect game whose core mechanic is merely mimicking specific gestures to trigger specific events, which could just as well be triggered by any other input device, such as a gamepad.

Our game, “Odd One Out - humans not allowed”, is a four-player party game in which the players have to disguise themselves as non-playable characters (NPCs). The motion of each player is tracked by the program and projected onto one of the ten characters on screen. The remaining six characters are computer-controlled NPCs. The goal is to identify the other players' avatars and kick them out of the game.

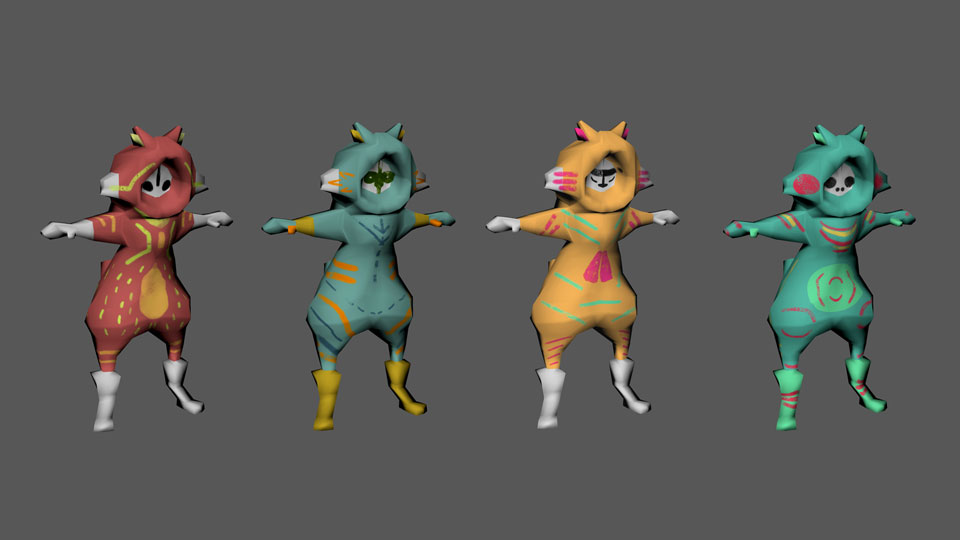

Character Color Schemes by Betti.

Game Mechanics

As soon as the players line up in front of the Kinect sensor, the game starts automatically. Each player is assigned a random character that mimics their body motion. The players then try to identify their own character and those of the other players. Once an opponent is identified, they can be excluded from the game. But beware: whoever tries to exclude an NPC loses the ability to exclude any other player. Unable to mark other characters, this player's chances of winning are close to zero. If everyone is fooled into selecting NPCs, the game ends in a draw. The player who manages to be the last survivor by kicking out all other players wins the game.
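The rules above can be summarized as a small state model. In this minimal Python sketch, characters are numbered 0 to 9 and players 0 to 3, matching the counts in the text; the `Match` class and its methods are hypothetical and not taken from the game's Blueprint implementation.

```python
import random
from dataclasses import dataclass, field
from typing import Optional

NUM_CHARACTERS = 10  # characters on screen
NUM_PLAYERS = 4      # human players; the remaining six characters are NPCs


@dataclass
class Match:
    player_of: dict[int, int]                           # character index -> player id (NPCs absent)
    alive: set[int] = field(default_factory=set)        # players still in the game
    can_exclude: set[int] = field(default_factory=set)  # players who have not picked an NPC yet

    @classmethod
    def start(cls, rng: random.Random) -> "Match":
        """Assign each player a distinct random character."""
        characters = rng.sample(range(NUM_CHARACTERS), NUM_PLAYERS)
        player_of = {character: player for player, character in enumerate(characters)}
        players = set(range(NUM_PLAYERS))
        return cls(player_of, alive=set(players), can_exclude=set(players))

    def exclude(self, player: int, character: int) -> None:
        """`player` tries to kick out `character`."""
        if player not in self.alive or player not in self.can_exclude:
            return  # eliminated players and players fooled by an NPC cannot act
        target = self.player_of.get(character)
        if target is None:
            self.can_exclude.discard(player)  # picked an NPC: lose the ability to exclude
        elif target != player:
            self.alive.discard(target)        # correctly identified an opponent

    def winner(self) -> Optional[int]:
        """The last surviving player, if only one is left."""
        return next(iter(self.alive)) if len(self.alive) == 1 else None

    def is_draw(self) -> bool:
        """Several players remain, but none of them can exclude anyone any more."""
        return len(self.alive) > 1 and not (self.alive & self.can_exclude)
```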

Finalized Characters by Betti.

Art Style

It was important to us to find a setting in which we could easily design very distinct characters, so we chose a theme of animal-like creatures. The initial plan was to create ten different character meshes, each with the traits of a specific animal to make it easy to recognize. Due to the time restriction, we had to cut this down to one mesh with ten different diffuse maps. The overall art style stayed clean and straightforward.

My Task Area

rough timetable

As the only programmer, I was free to choose the game engine. Since the previous project was created with Unreal Engine 4, I felt most comfortable with it. The timetable served as a rough outline of the three weeks. It was difficult to estimate how long each task would take because I had never worked with the Kinect before.

Understanding Microsoft’s Kinect V2.0

Testing the capabilities of the Kinect in combination with the SDK.

The first step was to understand how the Kinect works and what its capabilities are. I started by installing the Kinect SDK 2.0 and looking at how well it detects human bodies and calculates their bones. The SDK not only provides the position and orientation of the bones but can also produce a depth map of the camera feed at 30 frames per second. However, it became apparent that the SDK had problems calculating bones that were out of sight (e.g., a leg hidden behind the other leg), which resulted in weird body twitching. The best results came from a frontal perspective, which exposes all limbs most clearly to the camera. Body tracking from the side or back did not work very well. We also tried a top-down view (hanging the Kinect from the ceiling), which did not work at all.

Recording Movement

Dancing avatars: 4 of them are mimicking the players.

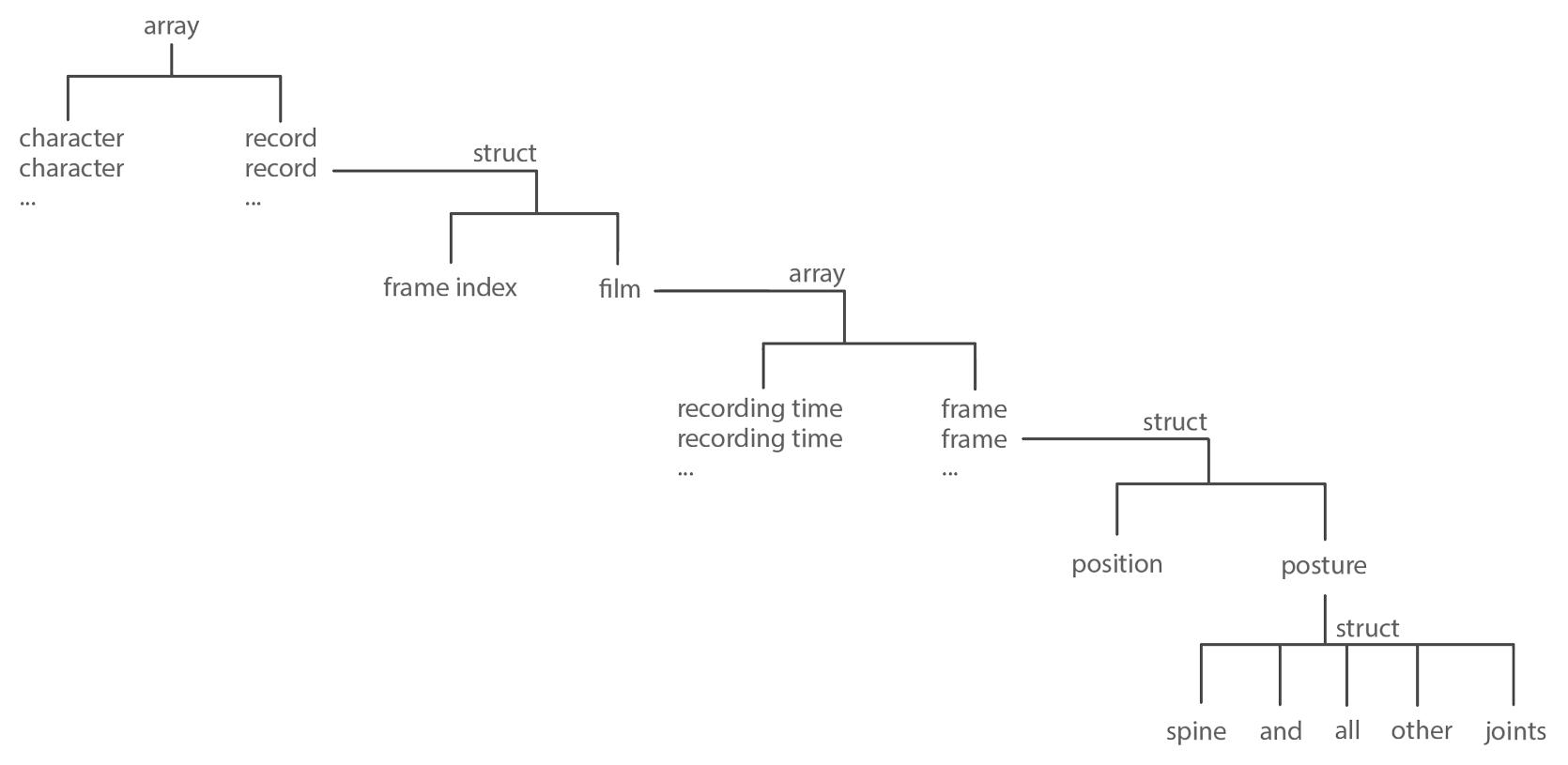

The idea was to record the real movements of people while they play and feed this data to the NPCs in later games, giving the computer-controlled characters very natural body movements. This task was particularly difficult for me and took by far the longest to achieve. To manage all the information for a recording and playback system, I needed to structure the data very carefully.

There is an array containing every character, and each character gets a record. This record is a struct containing a frame index and the actual film. The film is an array that stores each saved frame together with its recording time. A saved frame is a struct holding the position and the posture of the skeleton. Finally, a posture is a struct containing the orientation data of all joints, which together define the posture of the character.

structure of the data for the recording and playback function

While recording a character, the program fills the array called film with frames and their corresponding recording times. The frame index of the record is ignored for now; it is used later during playback. The recording time is required to make the later playback independent of the frame rate. Each saved frame stores the position and posture of the character in that particular game frame, and each saved posture contains the orientation of all joints, which together form the overall posture of the character.
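The nested structure described above (record, film, saved frame, posture) and the recording step can be sketched in Python as follows. The class and function names, and storing joint orientations as Euler-angle tuples keyed by joint name, are assumptions for illustration; the original was built with Blueprint structs.

```python
from dataclasses import dataclass, field

Vec3 = tuple[float, float, float]


@dataclass
class Posture:
    # Orientation of every joint, keyed by joint name (hypothetical representation).
    joint_orientations: dict[str, Vec3]


@dataclass
class SavedFrame:
    position: Vec3
    posture: Posture


@dataclass
class Record:
    frame_index: int = 0  # ignored while recording; used during playback
    film: list[tuple[float, SavedFrame]] = field(default_factory=list)  # (recording time, frame)


def record_frame(record: Record, recording_time: float, position: Vec3,
                 joint_orientations: dict[str, Vec3]) -> None:
    """Append the character's current position and posture, stamped with the recording time."""
    # Copy the joint data so later changes to the live skeleton do not alter the saved frame.
    frame = SavedFrame(position, Posture(dict(joint_orientations)))
    record.film.append((recording_time, frame))


# One record per character on screen.
records: list[Record] = [Record() for _ in range(10)]
```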

Playtest at our Studio.

When playing back a record on an NPC, all the data has to be fed to the NPC's animation blueprint. This follows the same principle, except that instead of writing the film, the program reads it out. The frame index starts at 0. In each in-game frame, the program looks into the film array, starting at the current frame index, and compares the recording time of the frame at that index with the current game time. If the recording time is lower than the game time, the program skips that saved frame and increases the frame index by one until it reaches a frame whose recording time is higher than the game time. At this point, the program reads the position and posture from that saved frame and feeds them to the character's animation blueprint.
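The playback loop described above can be sketched as follows. This is a hypothetical Python illustration: `playback_frame` and the generic frame payload are assumptions, `game_time` is assumed to be measured from the start of playback on the same clock as the recording times, and returning `None` at the end of the film is an assumed behavior the text does not specify.

```python
from dataclasses import dataclass, field
from typing import Any, Optional


@dataclass
class Record:
    frame_index: int = 0
    # Each entry is (recording time in seconds, saved frame); the frame payload is kept generic.
    film: list[tuple[float, Any]] = field(default_factory=list)


def playback_frame(record: Record, game_time: float) -> Optional[Any]:
    """Skip frames recorded before `game_time` and return the first one that is not."""
    film = record.film
    while record.frame_index < len(film) and film[record.frame_index][0] < game_time:
        record.frame_index += 1  # this saved frame already lies in the past
    if record.frame_index >= len(film):
        return None  # assumed: the film has run out
    return film[record.frame_index][1]
```

Because the frame is chosen by comparing times rather than by counting game frames, the playback speed stays the same regardless of the frame rate.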

Designing the sound.

Sound Design/Implementation

I used Ableton Live to create the soundtrack. I wanted to give it a childish character that is sometimes slightly off the beat, with a sound somewhere between a wooden xylophone and a ukulele. For the cursor feedback sounds, I used notes within the harmony of the soundtrack. I chose a cymbal crash for kicking out another player and a loud, off-key noise for trying to kick out the wrong character.

Considering that I spent only about five hours designing the feedback sounds and the soundtrack, I am quite satisfied with the result. The music fits the art style and does not become irritating after several loops.

Recap

our workspace (Betti and Anna)

Looking back, I would call this project a great success. I made a big leap forward in programming while maintaining a reasonable work-life balance. The recording function in particular forced me to keep my code tidy and structured, and I gained a lot of new programming knowledge by using structs and enums. The sound design went well, although I was worried about how little time was left at the end. Working that efficiently on the sound design made me even more confident for future projects.

Two things did not fit into the scope of a three-week project: a proper implementation of voice recognition and a Kinect interface that works without any third-party software. I am planning to look into both sooner or later.

Overall, I am pleased with the result of the project and am very satisfied with my performance. I am looking forward to the next project!

About Me

When I am not dancing Lindy hop or playing video games, I enjoy creating things and educating myself.

I have designed soundtracks for my games and have played guitar in a band.

Please feel free to browse through some of my hobby projects.